Google's Tensor inside of Pixel 6, Pixel 6 Pro: A Look into Performance & Efficiency

by Andrei Frumusanu on November 2, 2021 8:00 AM EST

Posted in: Mobile, Smartphones, SoCs, Pixel 6, Pixel 6 Pro, Google Tensor

Memory Subsystem & Latency

Usually, the first concern of an SoC design is its data fabric: the IP blocks need access to the caches and DRAM of the system at good latencies, because latency, especially on the CPU side, directly affects end-result performance under many workloads.

The Google Tensor is both similar to and different from the Exynos chips in this regard. Google does, however, fundamentally change how the internal fabric of the chip is set up in terms of its various buses and interconnects, so we do expect some differences.

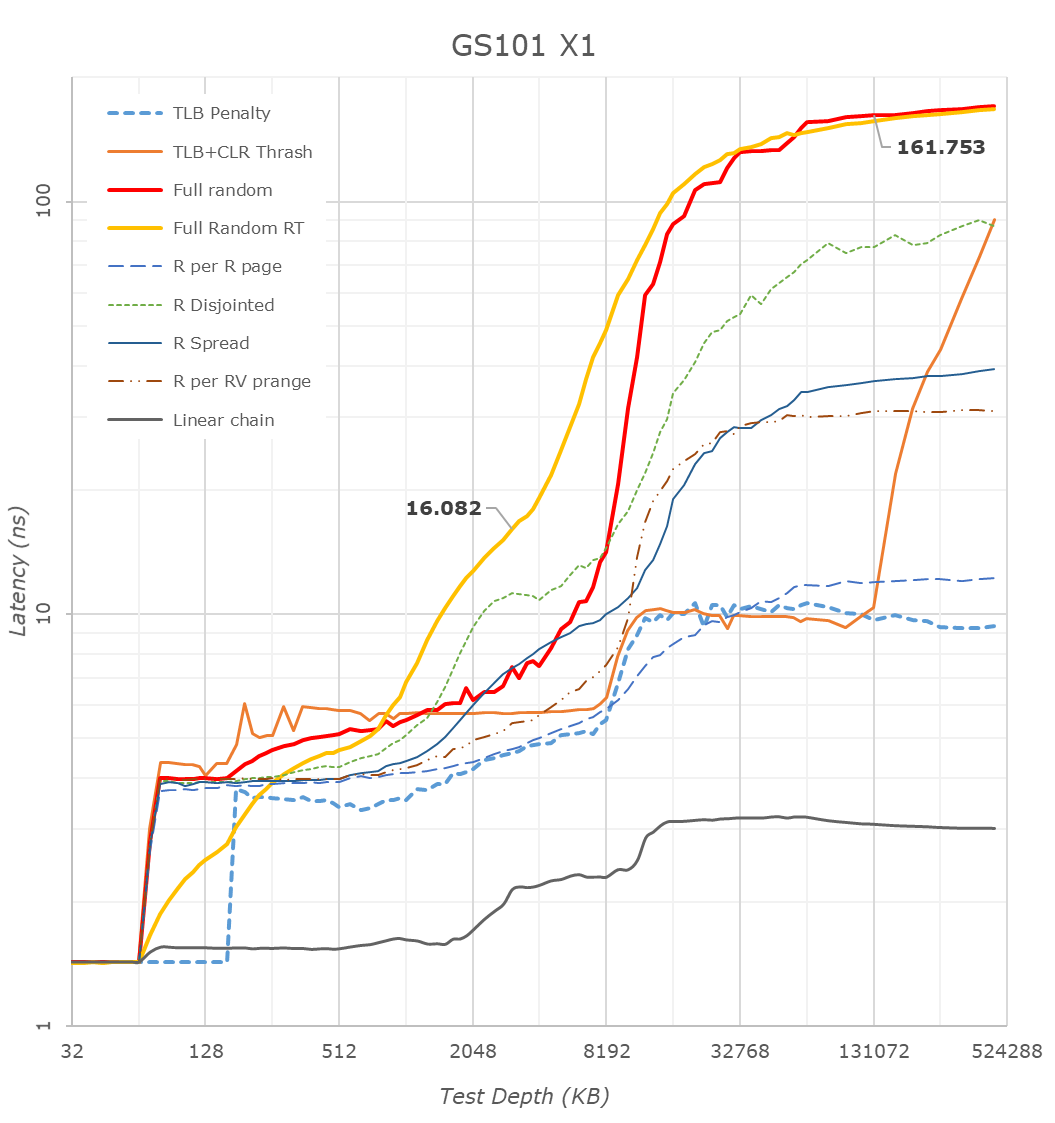

First off, we have to mention that many of the latency patterns here are still quite broken due to the new Arm temporal prefetchers that were introduced with the Cortex-X1 and A78 series CPUs. Please just pay attention to the orange “Full Random RT” curve, which bypasses these.
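For readers unfamiliar with how a fully random access pattern defeats prefetchers, the idea can be sketched as a pointer chase over a randomly ordered single cycle. This is a minimal illustration and not the tool used for the graphs in this article; the function names `build_random_cycle` and `chase` are hypothetical, and Python's interpreter overhead means the timing shows the shape of the method rather than real cache latencies.

```python
import random
import time


def build_random_cycle(n, seed=0):
    """Return a list `nxt` where following nxt[i] visits all n slots in one cycle.

    Uses Sattolo's algorithm, which always yields a single-cycle permutation,
    so the chase cannot get trapped in a short loop that fits in a small cache.
    """
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i, never i itself (Sattolo, not Fisher-Yates)
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt, steps):
    """Follow the chain for `steps` dependent loads.

    Returns (final index, average seconds per step).
    """
    idx = 0
    start = time.perf_counter()
    for _ in range(steps):
        idx = nxt[idx]  # each load depends on the result of the previous one
    elapsed = time.perf_counter() - start
    return idx, elapsed / steps
```

Because every load address depends on the previous load's result and the order is random, a prefetcher has no stride or repeating short pattern to lock onto. A real test would implement the same chain in native code, place each element on its own cache line, and sweep the buffer size across the cache hierarchy.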

There are a couple of things to see here. Let’s start on the CPU side, where we see the X1 cores of the Tensor chip configured with 1MB of L2, in contrast to the smaller 512KB of the Exynos 2100, but in line with what we see on the Snapdragon 888.

The second thing to note is that the Tensor’s DRAM latency doesn’t look good, showing a considerable regression compared to the Exynos 2100, which in turn was quite a bit worse off than the Snapdragon 888. While the measurements are correct in what they’re measuring, the picture is more complex because of the way Google operates the memory controllers on the Tensor. For the CPUs, Google ties the MC and DRAM speeds to performance counters of the CPUs and the actual workload IPC, as well as the memory stall % of the cores. This differs from the way Samsung runs things, which relies more on the transactional utilisation rate of the memory controllers. I’m not sure if the high memory latency figures of the CPUs are caused by this, or simply by a higher-latency fabric within the SoC, as I wasn’t able to confirm the runtime operational frequencies of the memory during the tests on this unrooted device. However, it’s a topic we’ll see brought up a few more times in the next few pages, especially in the CPU performance evaluation.
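To make the difference between the two scaling approaches concrete, here is a rough sketch of a controller-utilisation policy next to a CPU-counter policy. Everything in it is an assumption for illustration: the number of frequency steps, the function names, and especially the IPC weighting rule are invented and do not describe Google's or Samsung's actual governors.

```python
LEVELS = 4  # hypothetical number of DRAM/MC frequency steps


def pick_level(demand):
    """Map a demand score in [0, 1] to a frequency step index (0 = slowest)."""
    demand = min(max(demand, 0.0), 1.0)
    return min(int(demand * LEVELS), LEVELS - 1)


def utilisation_governor(busy_cycles, total_cycles):
    """Controller-side policy: scale with how busy the memory controller is."""
    return pick_level(busy_cycles / total_cycles)


def cpu_counter_governor(instructions, cpu_cycles, stall_cycles):
    """CPU-side policy: scale with memory stalls, weighted by low IPC.

    The weighting rule below is invented purely for illustration.
    """
    ipc = instructions / cpu_cycles
    stall_fraction = stall_cycles / cpu_cycles
    weight = 1.0 if ipc < 1.0 else 0.5  # hypothetical: low IPC counts as memory-bound
    return pick_level(stall_fraction * weight)
```

A latency test with a single outstanding load keeps the memory controller mostly idle while stalling the core almost all the time, so the two policies see very different inputs for the same workload. Which frequency the real hardware settles on in that situation is exactly what could not be confirmed on the unrooted device.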

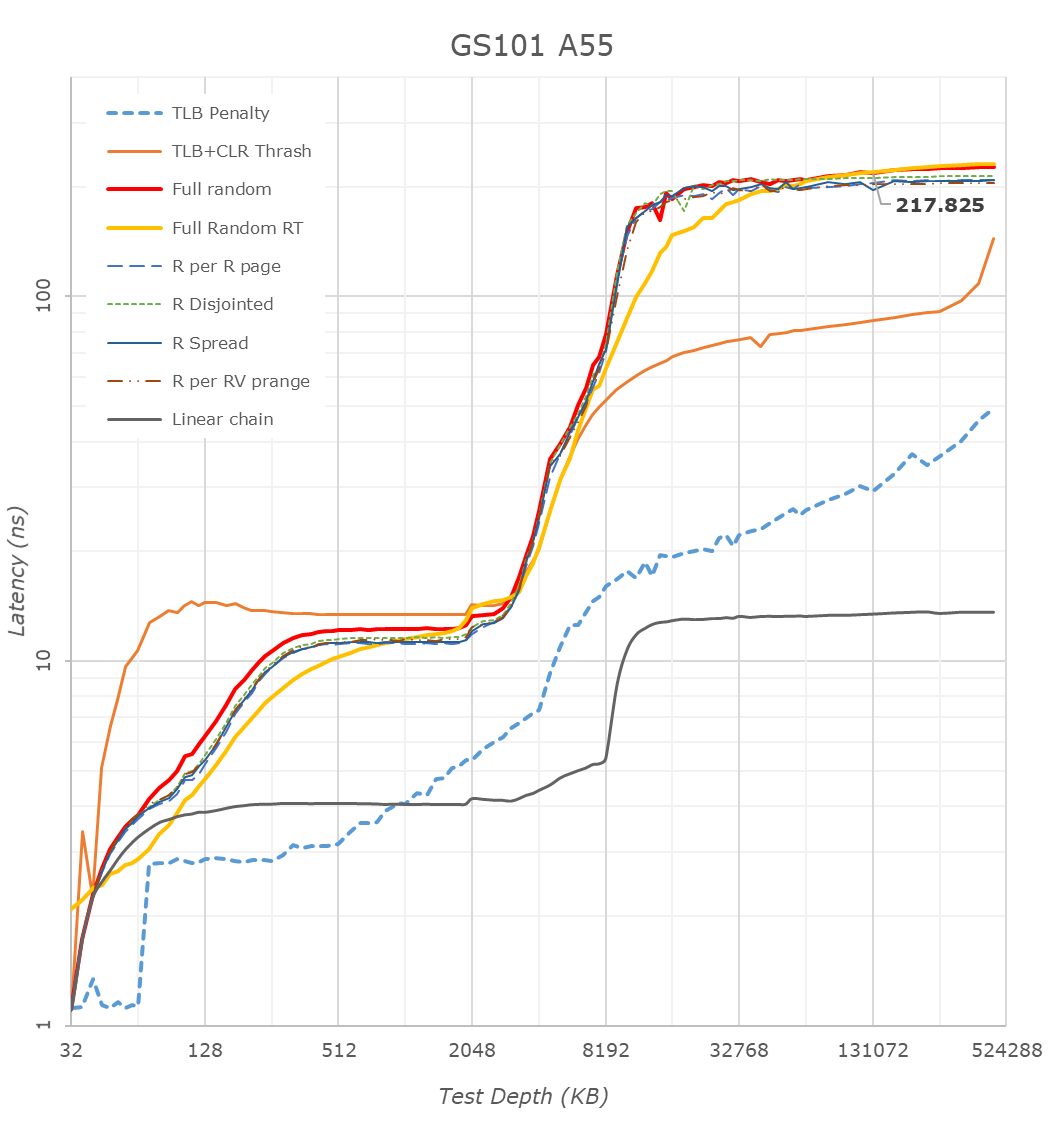

The Cortex-A76 view of things looks more normal in terms of latencies, as it isn’t impacted by the temporal prefetchers. Still, the latencies here are significantly higher than on competitor SoCs, on all patterns.

What I found weird was that the L3 latencies of the Tensor SoC also look to be quite high, above those of the Exynos 2100 and Snapdragon 888 by a noticeable margin. One odd thing about the Tensor SoC is that Google didn’t give the DSU and the L3 cache of the CPU cluster a dedicated clock plane, instead tying it to the frequency of the Cortex-A55 cores. As a result, even if the X1 or A76 cores are under full load, the A55 cores and the L3 still run at lower frequencies. The same scenario on the Exynos or Snapdragon chips would raise the frequency of the L3. This behaviour can be confirmed by running a dummy load on the Cortex-A55 cores in order to drive the L3 clock higher, which improves the figures on both the X1 and A76 cores.
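The effect of the shared clock plane follows from simple arithmetic: an access that costs a fixed number of cycles in the L3's clock domain takes longer in wall-clock time when that domain runs slower. The sketch below uses hypothetical cycle counts and frequencies, not measurements from this article, and ignores any cost of crossing between clock domains.

```python
def latency_ns(cycles, freq_mhz):
    """Convert a latency in clock cycles to nanoseconds at the given clock."""
    return cycles * 1000.0 / freq_mhz


# Hypothetical values for illustration; not measurements from the article.
L3_CYCLES = 40
for freq_mhz in (1000, 2000):
    print(f"{freq_mhz} MHz -> {latency_ns(L3_CYCLES, freq_mhz):.1f} ns")
```

Under these assumptions, halving the L3 clock doubles the time the same access takes, which is consistent with the big cores seeing better L3 figures once a dummy load pushes the A55 and L3 clocks up.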

The system level cache is visible in the latency hump starting at around 11-13MB (1MB L2 + 4MB L3 + 8MB SLC). I’m not showing it in the graphs here, but memory bandwidth on normal accesses on the Google chip is also lower than on the Exynos. I do think I see more fabric bandwidth when doing things such as modifying individual cache lines, which is one of the reasons I think the SLC architecture is different from what’s on the Exynos 2100.
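The 13MB upper bound is just the running total of the cache sizes quoted above. As a small sketch, assuming each level contributes its full capacity on top of the one before it (which the additive figure implies, but which is an assumption about how the caches are managed):

```python
from itertools import accumulate

# Cache sizes in MB as quoted in the text, ordered from closest to the core outward.
levels = [("L2", 1), ("L3", 4), ("SLC", 8)]

# Running total: the test depth at which each level would be exhausted.
boundaries = list(accumulate(size for _, size in levels))

for (name, _), depth in zip(levels, boundaries):
    print(f"{name} exhausted at about {depth} MB")
```

If the levels held overlapping copies of the same data, the transitions would show up earlier than these totals, which may be part of why the hump begins somewhat below 13MB.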

The A55 cores on the Google Tensor have 128KB of L2 cache. What’s interesting here is that, because the L3 is on the same clock plane as the Cortex-A55 cores and runs at the same higher frequencies, the Tensor’s A55s have the lowest L3 latencies of all the SoCs, as they do without an asynchronous clock bridge between the blocks. Like on the Exynos, there’s some sort of increase at 2MB, something we don’t see on the Snapdragon 888, and which I think is related to how the L3 is implemented on the chips.

Overall, the Tensor SoC is quite different here in how it’s operated, and there are some key behaviours that we’ll have to keep in mind for the performance evaluation part.

108 Comments


sharath.naik - Saturday, November 13, 2021

Looks like you both do not value the concept of understanding the topic before responding, so pay attention this time. I am talking about hardware binning, not software binning like everyone else does for low light. Hardware binning means the sensors NEVER produce anything other than 12MP. Do both of you understand what NEVER means? Never means these sensors are NEVER capable of 50MP or 48MP. NEVER means Pixel 3x zoom are 1.3MP low resolution images (yes, that is all your portrait modes). NEVER means at 10x Pixel images are down to 2.5MP. Next time, both of you learn to read, learn to listen before responding like you do.

meacupla - Tuesday, November 2, 2021

IDK about you, but livetranslation is very useful if you have to interact with people who can't speak a language you can speak fluently.

BigDH01 - Tuesday, November 2, 2021

Agreed this is useful in those situations where it's needed but those situations probably aren't very common for those of us that don't do a lot of international travel. In local situations with non-native English speakers typically enough English is still known to "get by."

Justwork - Friday, November 5, 2021

Not always. My in-law just moved in who knows no English and I barely speak their native language. We've always relied on translation apps to get by. When I got the P6 this weekend, both our lives just got dramatically better. The experience is just so much more integrated and way faster. No more spending minutes pausing while we type responses. The live translate is literally life changing because of how improved it is. I know others in my situation, it's not that uncommon and they are very excited for this phone because of this one capability.

name99 - Tuesday, November 2, 2021

Agreed, but, in the context of the review:

- does this need to run locally? (My guess is yes, that non-local is noticeably slower, and requires an internet connection you may not have.)

- does anyone run it locally? (no idea)

- is the constraint on running it locally and well the amount of inference HW? Or the model size? Or something else like CPU? I.e. does Tensor the chip actually do this better than QC (or Apple, or, hell, an Intel laptop)?

SonOfKratos - Tuesday, November 2, 2021

Wow. You know what, the fact that the phone has a modem to compete with Qualcomm for the first time in the US is good enough for me. The more competition the better, yes Qualcomm is still collecting royalties for their patents but who cares.

Alistair - Tuesday, November 2, 2021

That's a lot of words to basically state the truth, Tensor is a cheap chip, nothing new here. Next. I'm waiting for Samsung + AMD.

Alistair - Tuesday, November 2, 2021

phones are cheap too, but too expensive

Wrs - Tuesday, November 2, 2021

Surprisingly large for being cheap. Dual X1's with 1 MB cache, 20 Mali cores. So many inefficiencies just to get a language translation block. As if both the engineers and the bean counters fell asleep. To be fair, it's a first-gen phone SoC for Google.

Idk if I regard Samsung + AMD as much better, though. Once upon a time AMD had a low-power graphics department. They sold that off to Qualcomm over a decade ago. So this would probably be AMD's first gen on phones too. And the ARM X1 core remains a problem. It's customizable but the blueprint seems to throw efficiency out the window for performance. You don't want any silicon in a phone that throws efficiency out the window.

Alistair - Wednesday, November 3, 2021

Will we still be on X1 next year? I hope not. I'm hoping next year is finally the boost that Android needs for SoCs.