Google's Tensor inside of Pixel 6, Pixel 6 Pro: A Look into Performance & Efficiency

by Andrei Frumusanu on November 2, 2021 8:00 AM EST - Posted in

- Mobile

- Smartphones

- SoCs

- Pixel 6

- Pixel 6 Pro

- Google Tensor

Memory Subsystem & Latency

Usually, the first concern in an SoC design is that its data fabric performs well, properly giving its IP blocks access to the system’s caches and DRAM with good latency, as latency, especially on the CPU side, is directly tied to end-result performance in many workloads.

The Google Tensor is both similar to and different from the Exynos chips in this regard. Google does, however, fundamentally change how the chip’s internal fabric is set up in terms of its various buses and interconnects, so we do expect some differences.

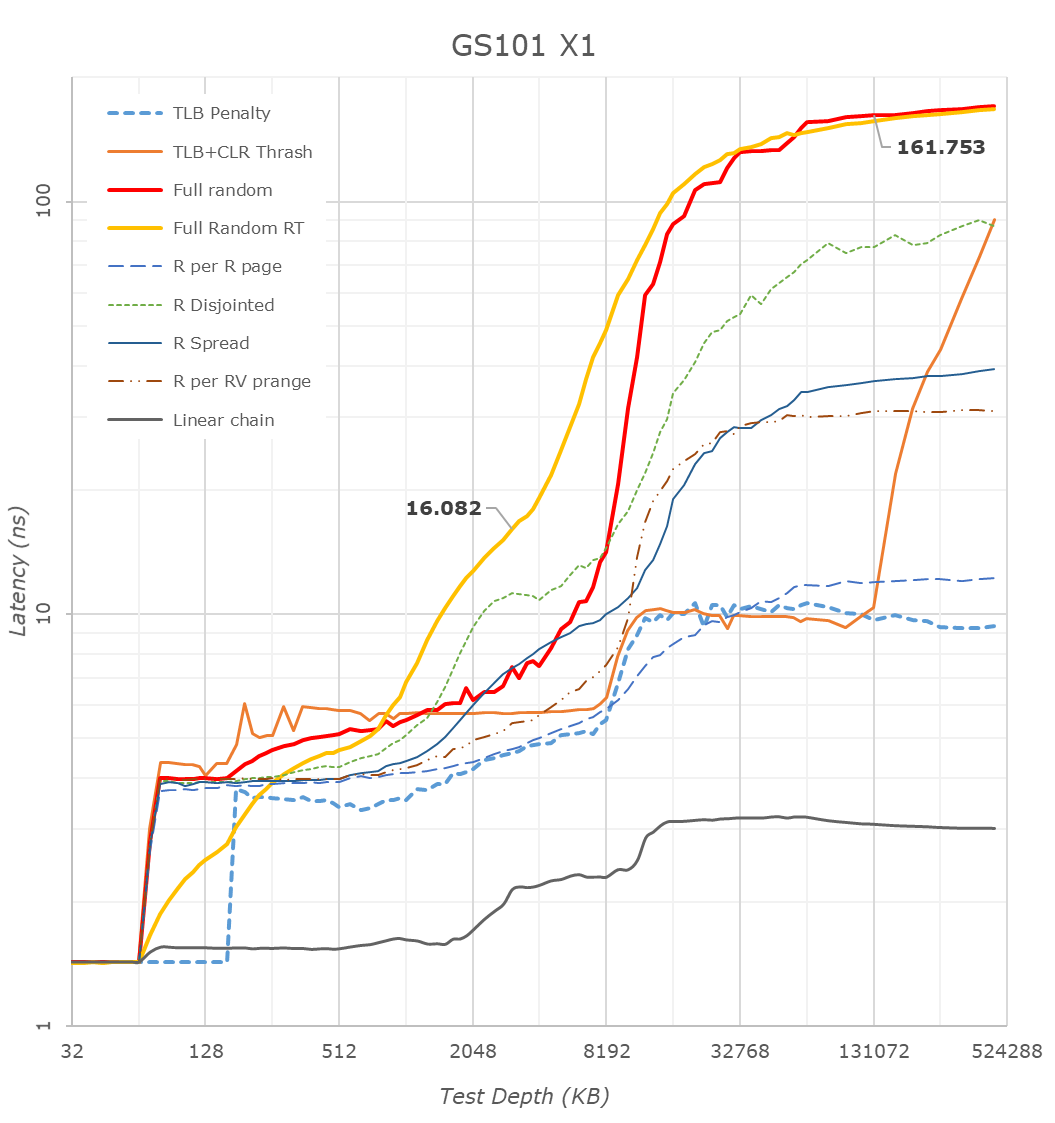

First off, we have to mention that many of the latency patterns here are still quite broken due to the new Arm temporal prefetchers that were introduced with the Cortex-X1 and A78 series CPUs. Please just pay attention to the orange “Full Random RT” curve, which bypasses these.
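
A fully random chase defeats prefetchers because each address depends on the value loaded by the previous access, and the address sequence has no pattern to learn. A minimal sketch of how such a chain can be built, assuming a pointer-chasing design (the benchmark’s own implementation isn’t described here), uses Sattolo’s algorithm to produce a single random cycle over the buffer’s slots. The function names are hypothetical, and pure Python is far too slow to measure cache latencies; a real test would lay these indices out at cache-line granularity in native code and time the chase.

```python
import random


def build_random_chain(num_slots: int, seed: int = 0) -> list[int]:
    """Return next_idx where next_idx[i] is the slot to visit after slot i.

    Sattolo's algorithm yields a uniformly random *cyclic* permutation,
    so following the chain from any slot visits every slot once per lap.
    """
    rng = random.Random(seed)
    perm = list(range(num_slots))
    for i in range(num_slots - 1, 0, -1):
        j = rng.randrange(i)  # j < i strictly (plain Fisher-Yates allows j == i)
        perm[i], perm[j] = perm[j], perm[i]
    return perm


def chase(next_idx: list[int], steps: int, start: int = 0) -> int:
    """Follow the chain; every step depends on the result of the previous one."""
    idx = start
    for _ in range(steps):
        idx = next_idx[idx]
    return idx
```

Because the permutation is a single cycle, `chase(chain, len(chain))` always returns to the starting slot, which makes it easy to check that no slot was skipped.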

There are a couple of things to see here. Let’s start on the CPU side, where the X1 cores of the Tensor chip are configured with 1MB of L2, in contrast to the smaller 512KB of the Exynos 2100 but in line with what we see on the Snapdragon 888.

The second thing to note is that the Tensor’s DRAM latency doesn’t look good: it shows a considerable regression compared to the Exynos 2100, which in turn was already noticeably worse than the Snapdragon 888. While the measurements are correct in what they measure, the picture is complicated by the way Google operates the memory controllers on the Tensor. For the CPUs, Google scales the memory controller (MC) and DRAM frequencies based on CPU performance counters, namely the actual workload IPC and the memory stall percentage of the cores. This differs from Samsung’s approach, which is driven more by the transactional utilisation rate of the memory controllers. I’m not sure whether the high CPU memory latency figures are caused by this or simply by a higher-latency fabric within the SoC, as I wasn’t able to confirm the memory’s runtime operating frequencies during the tests on this unrooted device. Either way, it’s a topic that will come up a few more times in the next few pages, especially in the CPU performance evaluation.
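
To make the distinction concrete, here is a minimal sketch of the two kinds of memory DVFS input described above. Everything in it is hypothetical: the frequency table, the linear mapping and the function names are illustrative assumptions, not Google’s or Samsung’s actual governors, whose details aren’t known. The only point is that one policy reads per-core counters (here just the memory stall fraction) while the other reads memory controller utilisation.

```python
# Hypothetical sketch: two ways a governor might pick a DRAM frequency.
# The step table and the linear mapping are illustrative, not real values.
DRAM_STEPS_MHZ = [400, 800, 1600, 2400, 3200]


def pick_step(demand: float) -> int:
    """Map a demand signal in [0, 1] linearly onto the step table (clamped)."""
    demand = min(max(demand, 0.0), 1.0)
    index = int(demand * (len(DRAM_STEPS_MHZ) - 1) + 0.5)  # round half up
    return DRAM_STEPS_MHZ[index]


def counter_based_policy(mem_stall_frac: float) -> int:
    """CPU-counter driven: scale with the share of core cycles stalled on memory.

    The real policy reportedly also weighs IPC; how is unknown, so it is omitted.
    """
    return pick_step(mem_stall_frac)


def utilisation_based_policy(mc_busy_frac: float) -> int:
    """Transaction driven: scale with how busy the memory controller is."""
    return pick_step(mc_busy_frac)
```

With these made-up numbers, a workload with `mem_stall_frac=0.9` but `mc_busy_frac=0.05` would get 3200 MHz from the first function and 400 MHz from the second; which signal a given latency test actually drives on Tensor is exactly what couldn’t be verified on the unrooted device.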

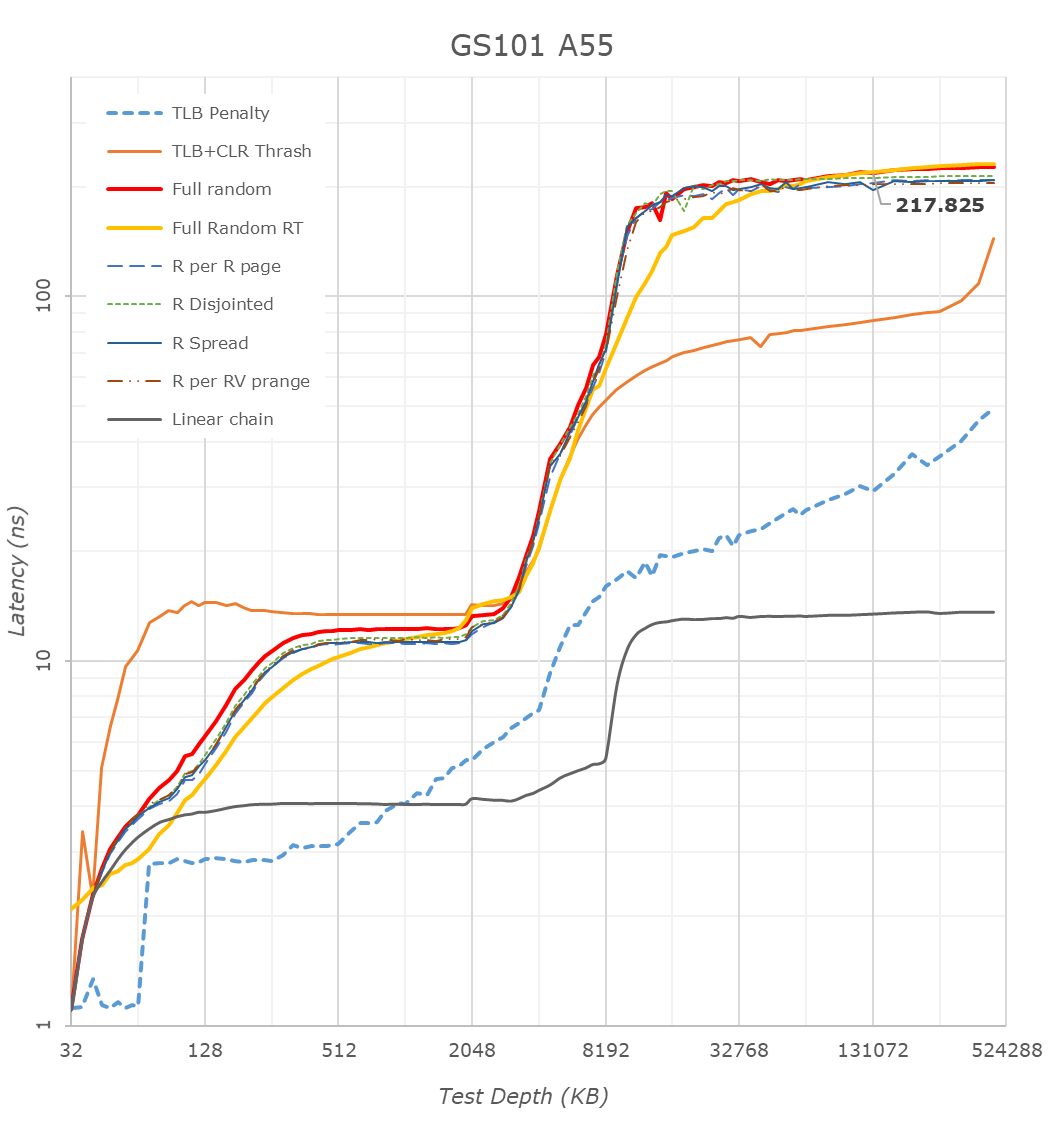

The Cortex-A76 view looks more normal in terms of latencies, as these cores aren’t affected by the temporal prefetchers. Still, the latencies here are significantly higher than on competitor SoCs across all patterns.

What I found odd was that the L3 latencies of the Tensor SoC also look quite high, above those of the Exynos 2100 and Snapdragon 888 by a noticeable margin. One peculiarity of the Tensor SoC is that Google didn’t give the DSU and the L3 cache of the CPU cluster a dedicated clock plane, instead tying them to the frequency of the Cortex-A55 cores. As a result, even if the X1 or A76 cores are under full load, the A55 cores as well as the L3 are still running at lower frequencies, whereas the same scenario on the Exynos or Snapdragon chips would raise the frequency of the L3. This behaviour can be confirmed by running a dummy load on the Cortex-A55 cores to drive the L3 frequency higher, which improves the figures on both the X1 and A76 cores.
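
The effect of the shared clock plane can be reasoned about with simple arithmetic: an L3 hit costs a roughly fixed number of L3 clock cycles, so its time in nanoseconds scales inversely with the L3 frequency. The sketch below assumes that simplified model; the cycle count, the frequencies and the optional fixed clock-domain crossing term are hypothetical parameters, not measured Tensor figures.

```python
def l3_hit_latency_ns(l3_cycles: float, l3_freq_ghz: float,
                      crossing_ns: float = 0.0) -> float:
    """Approximate L3 hit time under a simplified model (assumption).

    l3_cycles:   cycles spent in the L3/DSU pipeline, counted at the L3 clock
    l3_freq_ghz: L3 clock frequency; at 1 GHz one cycle takes one nanosecond
    crossing_ns: hypothetical fixed cost of an asynchronous clock bridge
                 between the requesting core and the L3 (0 on a shared plane)
    """
    if l3_freq_ghz <= 0:
        raise ValueError("frequency must be positive")
    return l3_cycles / l3_freq_ghz + crossing_ns


# Illustrative numbers only: the same 30-cycle L3 access at two L3 clocks.
slow = l3_hit_latency_ns(30, 1.0)  # L3 left at a low A55-cluster clock
fast = l3_hit_latency_ns(30, 2.0)  # L3 driven higher, e.g. by a dummy A55 load
print(f"{slow:.1f} ns vs {fast:.1f} ns")  # 30.0 ns vs 15.0 ns
```

In this model, raising the L3 clock shrinks only the cycle-bound part of the latency, which matches the direction (not necessarily the magnitude) of the improvement seen with the dummy A55 load.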

The system-level cache (SLC) is visible in the latency hump starting at around 11-13MB (1MB L2 + 4MB L3 + 8MB SLC). It isn’t shown in the graphs here, but memory bandwidth for normal accesses on the Google chip is also lower than on the Exynos. However, I think I do see more fabric bandwidth when doing things such as modifying individual cache lines, which is one of the reasons I think the SLC architecture differs from that of the Exynos 2100.
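
The 11-13MB figure follows from adding up the capacities an X1 core can see. As a rough sketch, assuming the levels behave as if their capacities add up (the same assumption behind the 1MB + 4MB + 8MB sum above; real inclusion and replacement policies blur the boundaries), one can estimate the furthest level a random-access working set has to reach. The table and function name are illustrative.

```python
# Capacities from the text, as seen from a Cortex-X1 core. Treating them as
# additive assumes mostly non-overlapping contents between levels.
LEVELS_MB = [("L2", 1.0), ("L3", 4.0), ("SLC", 8.0)]


def serving_level(working_set_mb: float) -> str:
    """Return the furthest level needed to hold the whole working set."""
    cumulative = 0.0
    for name, size_mb in LEVELS_MB:
        cumulative += size_mb
        if working_set_mb <= cumulative:
            return name
    return "DRAM"
```

Under this model, working sets above 5MB spill out of L3 into the SLC, and above 13MB everything beyond the caches has to come from DRAM, which is where the latency curve climbs.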

The A55 cores on the Google Tensor have 128KB of L2 cache. What’s interesting here is that because the L3 is on the same clock plane as the Cortex-A55 cores and runs at the same frequency, the Tensor’s A55s have the lowest L3 latencies of all the SoCs, as they do without an asynchronous clock bridge between the blocks. As on the Exynos, there’s some sort of increase at 2MB, something we don’t see on the Snapdragon 888, which I think is related to how the L3 is implemented on these chips.

Overall, the Tensor SoC is quite different here in how it’s operated, and there are some key behaviours that we’ll have to keep in mind for the performance evaluation part.

108 Comments


Alistair - Tuesday, November 2, 2021

It's very irritating how slow Android SoCs are. I'll just keep on waiting. Won't give up my existing Android phone until actual performance improvements arrive. Hopefully Samsung x AMD will make a difference next year.

Speedfriend - Thursday, November 4, 2021

Looking at the excellent battery life of the iPhone 13 (which I am currently waiting for as my work phone), does the iPhone still kill or suspend background tasks? When I used to day trade, my iPhone would stop prices from updating in the background, which was very annoying when I would flick to the app to check prices and unwittingly see prices that were hours old.

ksec - Tuesday, November 2, 2021

AV1 hardware decoder having a design problem again? Where have I heard this before?

Peskarik - Tuesday, November 2, 2021

Preplanned obsolescence.

tuxRoller - Tuesday, November 2, 2021

I wonder if Google is using the Panfrost open-source driver for Mali? That might account for some of the performance issues.

TheinsanegamerN - Tuesday, November 2, 2021

Based on the thermals, it seems to me that the Pixel 6/Pro suffer from thermal throttling and thus have lower power budgets than they should have given the internal hardware, leading to poor results. Makes me wonder what one of these chips could do in a better-designed chassis.

name99 - Tuesday, November 2, 2021

I'd like to ask a question that's not rooted in any particular company, whether it's x86, Google, or Apple, namely: how different *really* are all these AI acceleration tools, and what sort of timelines can we expect for what?

Here are the kinds of use cases I'm aware of:

For vision we have

- various photo improvement stuff (deblur, bokeh, night vision, etc.). Works at a level people consider OK, getting better every year.

Presumably the next step is similar improvement applied to video.

- recognition. Objects, OCR. I'd say the Apple stuff is "acceptable". The OCR is genuinely useful (eg search for "covid" will bring up a scan of my covid card without me ever having tagged it or whatever), and the object recognition gets better every year. Basics like "cat" or person recognition work well, the newest stuff (like recognizing plant species) seems to be accurate, but the current UI is idiotic and needs to be fixed (irrelevant for our purposes).

On the one hand, you can say Google has had this for years. On the other hand my practical experience with Google Lens and recognition is that the app has been through so many rounds of "it's on iOS, no it isn't; it's available in the browser, no it isn't" that I've lost all interest in trying to figure out where it now lives when I want that sort of functionality. So I've no idea whether it's better than Apple along any important dimensions.

For audio we have

- speech recognition, and speech synth. Both of these have been moved over the years from Apple servers to Apple HW, and honestly both are now remarkably good. The only time speech recognition serves me poorly is when there is a mic issue (like my watch is covered by something, or I'm using the mic associated with my car head unit, not the iPhone mic).

You only realize how impressive this is when you hear voice synth from older platforms, like the last time I used Tesla maybe 3 yrs ago the voice synth was noticeably more grating and "synthetic" than Apple. I assume Google is at essentially Apple level -- less HW and worse mics to throw at the problem, but probably better models.

- maybe there's some AI now powering Shazam? Regardless it always worked well, but gets better and faster every year.

For misc we have

- various pose/motion recognition stuff. Apple does this for recognizing types of exercises, or handwashing, and it works fine. I don't know if Google does anything similar. It does need a watch. Not clear how much further this can go. You can fantasize about weird gesture UIs, but I'm not sure the world cares.

- AI-powered keyboards. In the case of Apple this seems an utter disaster. They've been at it for years, it seems no better now with 100x the HW than it was five years ago, and I think everyone hates it. Not sure what's going on here.

Maybe it's just a bad UI for indicating that the "recognition" is tentative and may be revised as you go further?

Maybe the model is (not quite, but almost entirely) single-word based rather than grammar and semantic based?

Maybe the model simply does not learn, ever, from how I write?

Maybe the model is too much trained by the actual writing of cretins and illiterates, and tries to force my language down to that level?

Regardless, it's just terrible.

What's this like in the Google world? No "AI"-powered keyboards? Or do they exist and are hated? Or do they exist and work really well?

Finally we have language.

Translation seems to have crossed into "good enough" territory. I just compared Chinese->English for both Apple and Google and while both were good enough, neither was yet at fluent level. (Honestly I was impressed at the Apple quality which I rate as notably better than Google -- not what I expected!)

I've not yet had occasion to test Apple in translating images; when I tried this with Google, last time maybe 4 yrs ago, it worked but pretty terribly. The translation itself kept changing, like there was no intelligence being applied to use the "persistence" fact that the image was always of the same sign or item in a shop or whatever; and the presentation of the image, trying to overlay the original text and match font/size/style was so hit or miss as to be distracting.

Beyond translation we have semantic tasks (most obviously in the form of asking Siri/Google "knowledge" questions). I'm not interested in "which is a more useful assistant" type comparisons, rather which does a better job of faking semantic knowledge. Anecdotally Google is far ahead here, Alexa somewhat behind, and Apple even worse than Alexa; but I'm not sure those "rate the assistant" tests really get at what I am after. I'm more interested in the sorts of tests where you feed the AI a little story then ask it "common sense" questions, or related tasks like smart text summarization. At this level of language sophistication, everybody seems to be hopeless apart from huge experimental models.

So to recalibrate:

Google (and Apple, and QC) are putting lots of AI compute onto their SoCs. Where is it used, and how does it help?

Vision and video are, I think, clear answers, and we know what's happening there.

Audio (recognition and synth) are less clear because it's not as clear what's done locally and what's shipped off to a server. But quality has clearly become a lot better, and at least some of that I think happens locally.

With translation, I'm extremely unclear on how much happens locally vs remotely.

And semantics/content/language (even at just the basic smart secretary level) seems hopeless, nothing like intelligent summaries of piles of text, or actually useful understanding of my interests. Recommendation systems, for example, seem utterly hopeless, no matter the field or the company.

So, e.g., we have Tensor with the ability to run a small BERT-style model at higher performance than anyone else. Do we have ways today in which that is used? Ways in which it will be used in future that aren't gimmicks? (For example there was supposed to be that thing with Google answering the phone and taking orders or whatever it was doing, but that seems to have vanished without a trace.)

As I said, none of this is supposed to be confrontational. I just want a feel for various aspects of the landscape today -- who's good at what? are certain skills limited by lack of inference or by model size? what are surprising successes and failures?

dotjaz - Tuesday, November 2, 2021

"but I do think it’s likely that at the time of design of the chip, Samsung didn’t have newer IP ready for integration"

Come on. Even A77 was ready wayyyy before G78 and X1; how is it even remotely possible to have A76 not by choice?

Andrei Frumusanu - Wednesday, November 3, 2021

Samsung never used A77.

anonym - Sunday, November 7, 2021

Exynos 980 uses Cortex-A77