Google's Tensor inside of Pixel 6, Pixel 6 Pro: A Look into Performance & Efficiency

by Andrei Frumusanu on November 2, 2021 8:00 AM EST

CPU Performance & Power

On the CPU side of things, the Tensor SoC, as we discussed, does have some larger configuration differences to what we’ve seen on the Exynos 2100, and is actually more similar to the Snapdragon 888 in that regard, at least from the view of a single Cortex-X1 core. With double the L2 cache but a clock that is 3.7%, or 110MHz, lower, the Tensor and the Exynos should perform somewhat similarly, depending on the workload. The Snapdragon 888 showcases much better memory latency, so let’s also see if that actually plays out as such in the workloads.
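As a quick sanity check on the clock figures, the relative deficit can be computed directly. The sketch below is illustrative only: the peak X1 frequencies (2.80GHz for the Tensor, 2.91GHz for the Exynos 2100, 2.84GHz for the Snapdragon 888) are commonly quoted values assumed here rather than stated in this section, and the function name is made up for the example.

```python
# Commonly quoted peak Cortex-X1 clocks in MHz (assumed, not stated in the text above).
X1_PEAK_MHZ = {
    "Tensor": 2800,
    "Exynos 2100": 2910,
    "Snapdragon 888": 2840,
}


def clock_deficit(reference_mhz, candidate_mhz):
    """Return (absolute deficit in MHz, deficit as a percentage of the reference)."""
    delta = reference_mhz - candidate_mhz
    return delta, 100.0 * delta / reference_mhz


for rival in ("Exynos 2100", "Snapdragon 888"):
    delta, pct = clock_deficit(X1_PEAK_MHZ[rival], X1_PEAK_MHZ["Tensor"])
    print(f"Tensor vs {rival}: {delta:.0f}MHz lower ({pct:.1f}%)")
```

With these inputs the deficits come out at 110MHz against the Exynos 2100 and 40MHz against the Snapdragon 888, close to the percentages the article quotes.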

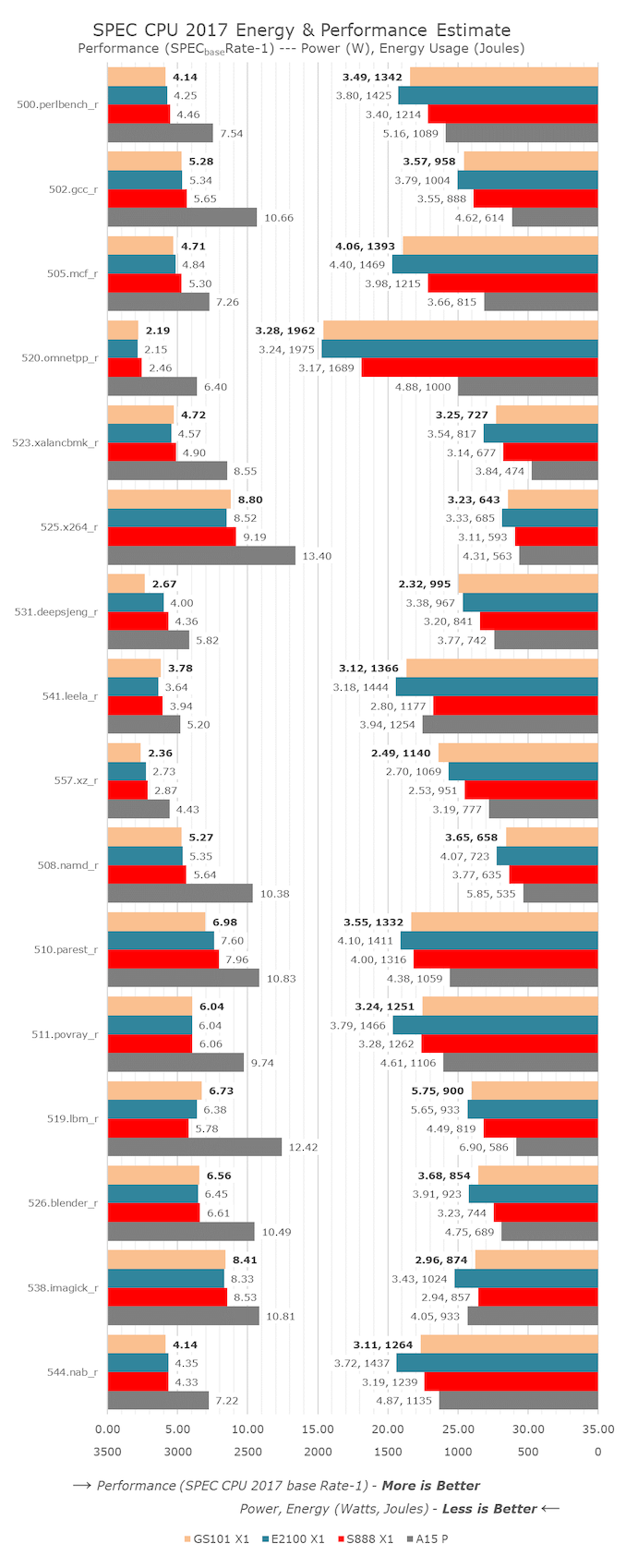

In the individual subtests in the SPEC suite, the Tensor fares well and at first glance isn’t all too different from the other two competitor SoCs, albeit there are changes, and there are some oddities in the performance metrics.

Pure memory latency workloads, as expected, seem to be a weakness of the chip (within what one can call a weakness, given the small differences between the chips). 505.mcf_r falls behind the Exynos 2100 by a small amount; I expected the doubled L2 cache to make more of a difference here. 502.gcc_r should also have seen larger benefits, but they fail to materialise. 519.lbm_r is bandwidth hungry, and here the chip does seem to have a slight advantage, but power is still extremely high: pretty much in line with the Exynos 2100, and considerably higher than the Snapdragon 888.

531.deepsjeng_r is extremely low. I’ve seen this behaviour in another SoC, the Dimensity 1200 inside the Xiaomi 11T, where it was due to the memory controllers and DRAM running slower than intended. I think we’re seeing the same characteristic here with the Tensor, as its way of controlling the memory controller frequency via CPU memory stall counters doesn’t seem to be working well in this workload. 557.xz_r is also below expectations, being 18% slower than the Snapdragon 888, and it also ends up using more energy than both the Exynos and the Snapdragon. I remember ex-Arm’s Mike Filippo once saying that every single clock cycle the core wastes waiting on memory has bad effects on performance and efficiency, and it seems that’s what’s happening here with the Tensor and the way it controls memory.
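To make the hypothesis concrete, here is a toy model of a memory-frequency governor driven by CPU stall counters. It is a sketch for illustration only and not Google’s or Samsung’s actual implementation: the frequency steps, thresholds, and all names are hypothetical. It shows how a workload whose stall ratio sits just under the ramp-up threshold can leave DRAM stuck at a low frequency even though it is latency sensitive.

```python
from dataclasses import dataclass

# Hypothetical DRAM frequency steps in MHz; real devices expose their own tables.
DRAM_STEPS_MHZ = (400, 800, 1600, 2400, 3200)


@dataclass
class CounterSample:
    cycles: int        # total CPU cycles in the sampling window
    stall_cycles: int  # cycles in that window spent stalled on memory


def next_dram_step(sample: CounterSample, current: int,
                   up_threshold: float = 0.30,
                   down_threshold: float = 0.10) -> int:
    """Return the next index into DRAM_STEPS_MHZ for one sampling window."""
    if sample.cycles <= 0:
        return current  # no activity recorded, so keep the current step
    stall_ratio = sample.stall_cycles / sample.cycles
    if stall_ratio > up_threshold:
        return min(current + 1, len(DRAM_STEPS_MHZ) - 1)  # ramp up one step
    if stall_ratio < down_threshold:
        return max(current - 1, 0)  # ramp down one step
    return current  # between the two thresholds: hold the current step


# A made-up workload that stalls 25% of the time never crosses the 30%
# threshold, so the governor never leaves the step it started on.
step = 0
for _ in range(10):
    sample = CounterSample(cycles=1_000_000, stall_cycles=250_000)
    step = next_dram_step(sample, step)
print(f"DRAM frequency after 10 windows: {DRAM_STEPS_MHZ[step]}MHz")
```

In this toy model the fix would be a lower ramp-up threshold or an additional input signal; whether anything like that applies to the real chip is not something the benchmark data can confirm.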

In more execution-bound workloads, in the int suite the Tensor does well in 525.x264_r, which I think is due to the larger L2. On the FP suite, we’re seeing some weird results, especially on the power side. 511.povray_r appears to be using a not insignificant amount less power than the Exynos 2100 even though performance is identical. 538.imagick_r also shows much less power usage on the part of the Tensor, at similar performance. Povray might benefit from the larger L2 and lower operating frequency (less voltage, more efficiency), but I can’t really explain the imagick result. In general, the Tensor SoC uses considerably less power in all the FP workloads compared to the Exynos, while this difference isn’t as great in the INT workloads. Possibly the X1 cores have some better physical implementation on the Tensor chip which reduces the FP power.

In the aggregate scores, the Tensor / GS101 lands slightly worse in performance than the Exynos 2100, and lags behind the Snapdragon 888 by a more notable 12.2% margin, all whilst consuming 13.8% more energy due to completing the task more slowly. The performance deficit against the Snapdragon should really only be 1.4%, corresponding to a 40MHz clock difference, so I’m attributing the loss here to the way Google runs their memory, or maybe also to possible real latency disadvantages of the SoC fabric. In SPECfp, which is more memory bandwidth sensitive (at least in the full suite, less so in our C/C++ subset), the Tensor SoC roughly matches the Snapdragon and Exynos in performance, while power and efficiency are closer to the Snapdragon, using 11.5% less power than the Exynos, and thus being more efficient here.
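The link between the performance and energy figures can be checked with a small calculation, using energy = average power × runtime. This is a sketch under stated assumptions: it reads the 12.2% deficit as the Tensor scoring 87.8% of the Snapdragon 888’s aggregate result, and it assumes runtime is inversely proportional to score. The function names are illustrative.

```python
def runtime_ratio(score_ratio: float) -> float:
    """Runtime relative to the reference, assuming runtime is proportional to 1 / score."""
    return 1.0 / score_ratio


def implied_power_ratio(energy_ratio: float, score_ratio: float) -> float:
    """Average power relative to the reference, from energy = power * runtime."""
    return energy_ratio / runtime_ratio(score_ratio)


tensor_score_vs_s888 = 1.0 - 0.122   # assumed reading of the 12.2% deficit
tensor_energy_vs_s888 = 1.0 + 0.138  # 13.8% more energy, from the text

slowdown = runtime_ratio(tensor_score_vs_s888)
power = implied_power_ratio(tensor_energy_vs_s888, tensor_score_vs_s888)
print(f"runtime ratio: {slowdown:.3f}")
print(f"implied average power ratio: {power:.3f}")
```

Under these assumptions the runtime grows by roughly 14%, which accounts for nearly all of the extra energy: the implied average power is almost the same on both chips.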

One issue that I encountered with the Tensor, and that marks it as extremely similar in behaviour to the Exynos 2100, is throttling on the X1 cores. Notably, the Exynos chip had issues running its cores at their peak frequency with active cooling at room temperature (~23°C), while the Snapdragon 888 had no such issues. I’m seeing similar behaviour on the Google Tensor’s X1 cores, albeit not as severe. The phone required sub-ambient cooling (I tested at 11°C) to sustain peak frequencies, scoring 5-9% better that way, particularly on the FP subtests.

I’m skipping over the detailed A76 and A55 subscores of the Tensor as they’re not that interesting; however, the aggregate scores are something we must discuss. As alluded to in the introduction, Google’s choice of using an A76 in the chip seemed extremely hard to justify, and the practical results we’re seeing in testing pretty much confirm our bad expectations of this CPU. The Tensor is running the A76 at 2.25GHz. The most similar data-point in the chart is the 2.5GHz A76 cores of the Exynos 990, though we have to remember that this was a 7LPP SoC while the Tensor is a 5LPE design like the Exynos 2100 and Snapdragon 888.

The Tensor’s A76 ends up more efficient than the Exynos 990’s, as one would hope to be the case. However, the Snapdragon 888’s A78 cores perform a whopping 46% better while using less energy to do so, which makes the Tensor’s A76 mid-cores look extremely bad. The IPC difference between the two chips is indeed around 34%, which is in line with the microarchitectural gap between the A76 and A78. The Tensor’s cores use a little bit less absolute power, but if this was Google’s top priority, they could have simply clocked a hypothetical A78 lower as well, and still ended up with a more performant and more efficient CPU setup. All in all, we didn’t understand why Google chose A76’s, as all the results end up expectedly bad, with the only explanation being that maybe Google just didn’t have a choice here, and took whatever Samsung could implement.
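The IPC comparison follows from dividing the performance ratio by the clock ratio. In the sketch below, the 2.25GHz A76 clock and the 46% performance gap come from the text, while the 2.42GHz clock for the Snapdragon 888’s A78 cores is an assumption based on commonly quoted specifications. The function name is illustrative.

```python
def ipc_ratio(perf_ratio: float, freq_ghz: float, ref_freq_ghz: float) -> float:
    """Per-clock performance of a core relative to a reference core.

    perf_ratio is the core's score divided by the reference core's score.
    """
    return perf_ratio / (freq_ghz / ref_freq_ghz)


# A78 (Snapdragon 888, assumed 2.42GHz) against A76 (Tensor, 2.25GHz).
a78_vs_a76 = ipc_ratio(perf_ratio=1.46, freq_ghz=2.42, ref_freq_ghz=2.25)
print(f"A78 per-clock advantage: {100 * (a78_vs_a76 - 1):.1f}%")
```

With these rounded inputs the result lands in the mid-30% range, in the same ballpark as the roughly 34% quoted above; the exact value depends on the unrounded scores and clocks.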

On the side of the Cortex-A55 cores, things also aren’t looking fantastic for the Tensor SoC. The cores do end up outperforming the equally clocked A55’s of the Snapdragon 888 by 11%, maybe due to the faster L3 access, or access to the chip’s SLC. However, efficiency here just isn’t good, as they use almost double the power, which is more characteristic of the higher power levels of the Exynos chips’ A55 cores. It’s here where I come back to say that what makes a SoC from one vendor different to the SoC from another is the very foundations and fabric design. For the low-power A55 cores of the Tensor, the architecture of the SoC encounters the same issue of the cores being overshadowed by system power, as we see on Exynos chips, ending up with power efficiency that’s actually quite a bit worse than the same chip’s own A76 cores, and much worse than the Snapdragon 888. MediaTek’s Dimensity 1200 goes even further in operating its A55 cores in seemingly the most efficient way, not to mention Apple’s SoCs.

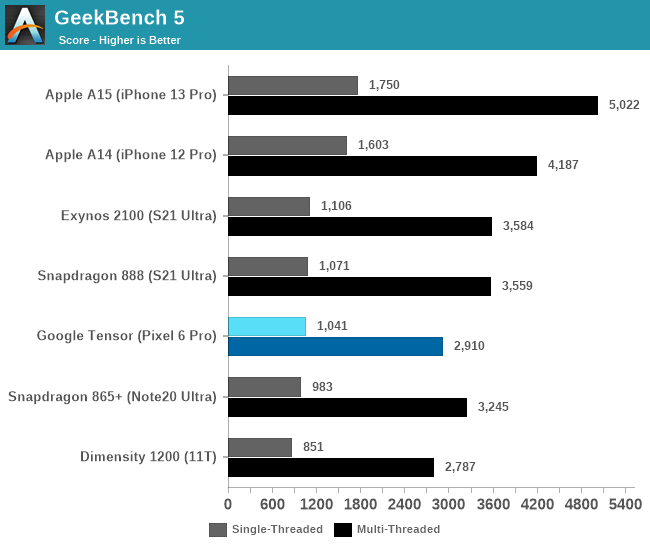

While we don’t run multi-threaded SPEC on phones, we can fall back on GeekBench 5, which serves the purpose very well.

Although the Google Tensor has twice as many X1 cores as the other Android SoCs, its Cortex-A76 cores underperform the middle cores of the competition by such a large degree that the chip’s total MT performance ends up lower than that of the competition.

Overall, the Google Tensor’s CPU setup, performance, and efficiency are a mixed bag. The two X1 cores of the chip end up slightly slower than the competition, and efficiency is most of the time in line with the Exynos 2100’s X1 cores, keeping up with the Snapdragon 888 only in some workloads. The Cortex-A76 middle cores of the chip in my view make no sense, as their performance and energy efficiency just aren’t up to date with 2021 designs. Finally, the A55 behavioural characteristic showcases that this chip is very much related to Samsung’s Exynos SoCs, falling behind in efficiency compared to how Qualcomm or MediaTek are able to operate their SoCs.

108 Comments

Wrs - Wednesday, November 3, 2021 - link

It’s Double data rate

NaturalViolence - Wednesday, December 1, 2021 - link

So you're saying the data rate is actually 6400MHz? LPDDR5 doesn't support that. Only regular DDR5 does.

Eifel234 - Wednesday, November 3, 2021 - link

I've had the Pixel 6 Pro for a week now and I have to say it's amazing. I don't care what the synthetic benchmarks say about the chip. It's crazy responsive and I get through a day easily with heavy usage on the battery. At a certain point extra CPU/GPU power doesn't get you anywhere unless you're an extreme phone gamer or trying to edit/render videos, both of which you should really just do on a computer anyway. What I care mostly about is how fast my apps are opening and how fast the UI is. There's a video comparison on YouTube of the same apps opening on the iPhone 13 max and the P6 Pro, and you know what, the P6 Pro wins handily at loading up many commonly used apps and even some games. Regarding the battery life, I expect to charge my phone nightly so I really don't care if another phone can get me a few more hours of usage after an entire day. I can get 6 hours of SOT and 18 hours unplugged on the battery. More than enough.

Lavkesh - Thursday, November 11, 2021 - link

Well that would be true if iOS apps were the same as Android apps. In the review of A15, it was called out how Android AAA games such as Genshin Impact were missing visual effects altogether which were basically present in iOS. These app opening tests are pretty obtuse in my opinion and it checks out as well. For a more meaningful comparison, have a look at this and how badly this so called google soc is spanked by A15!

Here's Exynos 2100 vs Google Pixel 6

https://www.youtube.com/watch?v=iDjzPPtC4kU&t=...

Here's Exynos 2100 vs iPhone

https://www.youtube.com/watch?v=U9A91bnVBU4

Arbie - Friday, November 5, 2021 - link

No earphone jack, no sale.

JoeDuarte - Saturday, November 6, 2021 - link

This piece has been up for three days, and there are still tons of typos and errors on every page? How is this happening? Why doesn't AnandTech maintain normal standards for publishers? I can't imagine publishing this piece without reading it. And after publishing it, I'd read it again – there's no way I wouldn't catch the typos and errors here. Word would catch many of them, so this is just annoying.

"...however it’s only 21% faster than the Exynos 2100, not exactly what we’d expect from 21% more cores."

The error above is substantive, and undercuts the meaning of the sentence. Readers will immediately know something is wrong, and will have to go back to find the correct figure, assuming anything at AnandTech is correct.

"...would would hope this to be the case."

That's great. How do they not notice an error like that? It's practically flashing at you. This is just so unprofessional and junky. And there are a lot more of these. It was too annoying to keep reading, so I quit.

ChrisGX - Monday, November 8, 2021 - link

Has Vulkan performance improved with Android 12? That is a serious question. There has been some strange reporting and punditry about the place that seems intent on strongly promoting the idea that the Tensor Mali GPU is endowed with oodles and oodles of usable GPU compute performance.

In order to make their case these pundits offer construals of reported benchmark scores of Tensor that appear to muddle fact and fiction. A recent update of Geekbench (5.4.3), for instance, in the view of these pundits, corrects a problem with Geekbench that caused it to understate Vulkan scores on Tensor. So far as I can tell, Primate Labs hasn't made any admission about such a basic flaw in their benchmark software, that needed to be (and has been) corrected, however. The changes in Geekbench 5.4.3, on the contrary, seem to be to improve stability.

I am hoping that there is a more sober explanation for the recent jump in Vulkan scores (assuming they aren't fakes) than these odd accounts that seem intent on defending Tensor from all criticism including criticism supported by careful benchmarking.

Of course, if Vulkan performance has indeed improved on ARM SoCs, then that improvement will also show up in benchmarks other than Geekbench. So, this is something that benchmarks can confirm or disprove.

ChrisGX - Monday, November 8, 2021 - link

The odd accounts that I believe have muddled fact and fiction are linked here:

https://chromeunboxed.com/update-geekbench-pixel-6...

https://mobile.twitter.com/SomeGadgetGuy/status/14...