Solidigm Announces D5-P5336: 64 TB-Class Data Center SSD Sets NVMe Capacity Records

by Ganesh T S on July 20, 2023 11:00 AM EST

Posted in: Storage, SSDs, Datacenter, Enterprise SSDs, QLC, Solidigm

Advancements in flash technology have come as a boon to data centers. Increasing layer counts coupled with better vendor confidence in triple-level (TLC) and quad-level cells (QLC) have contributed to top-line SSD capacities essentially doubling every few years. Data centers looking to optimize storage capacity on a per-rack basis are finding these top-tier SSDs to be an economically prudent investment from a TCO perspective.

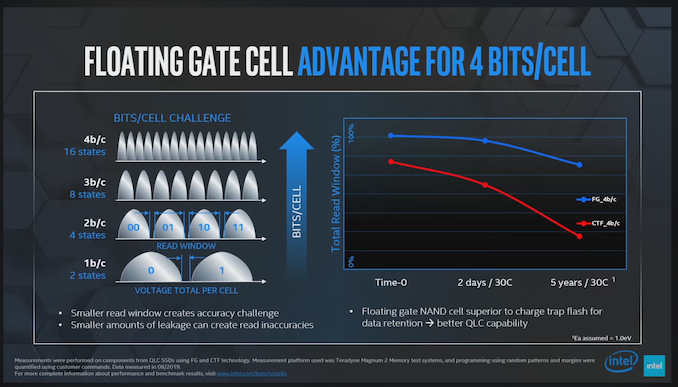

Solidigm was one of the first vendors to introduce a 32 TB-class enterprise SSD a few years back: the D5-P5316, built on the company's 144L 3D QLC NAND. The company has been extremely bullish on QLC SSDs in the data center. While other flash vendors moved on to charge trap configurations, Solidigm has continued to use a floating gate cell architecture. Floating gates retain programmed voltage levels for longer than charge trap cells, so the read window stays open much longer before a cell has to be 'refreshed'. That tighter voltage retention has served Solidigm well in bringing QLC SSDs to the enterprise market.

Source: The Advantages of Floating Gate Technology (YouTube)

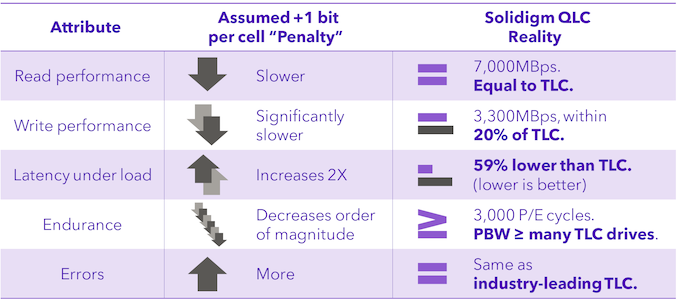

Solidigm claims that its 192L 3D QLC is extremely competitive against the TLC NAND its competitors currently have in the market (read: Samsung's 136L 6th Gen. V-NAND and Micron's 176L 3D TLC).

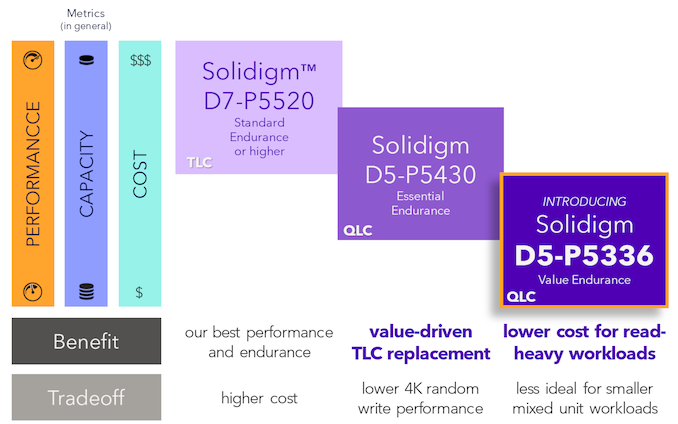

Solidigm segments its QLC data center SSDs into the 'Essential Endurance' and 'Value Endurance' lines. Back in May, the company introduced the D5-P5430 as a drop-in replacement for TLC workloads. At that time, it hinted at a new 'Value Endurance' SSD based on its fourth-generation QLC flash for the second half of the year. The newly announced D5-P5336 is the company's latest and greatest in the 'Value Endurance' line.

Solidigm's 2023 Data Center SSD Flagships by Market Segment

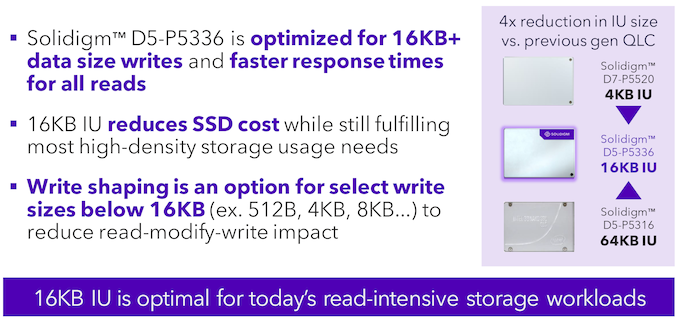

The D5-P5316 used a 64KB indirection unit (IU) (compared to the 4KB used in normal TLC data center SSDs). While endurance and speeds were acceptable for specific types of workloads that could avoid sub-64KB writes, Solidigm has decided to improve matters by opting for a 16KB IU in the D5-P5336.
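To see why the IU size matters for small writes, here is a minimal sketch of a simplified model. It assumes that the drive must rewrite, in full, every indirection unit that a host write touches (a read-modify-write), and that writes start on an IU boundary. It ignores garbage collection, caching, and write coalescing, so the numbers are only a rough lower bound, not Solidigm's figures. The function name `iu_write_amplification` is hypothetical.

```python
import math


def iu_write_amplification(write_size_kb: int, iu_size_kb: int) -> float:
    """Ratio of data the drive rewrites to data the host wrote.

    Simplified model (an assumption, not vendor data): each indirection
    unit touched by an IU-aligned write is rewritten in full.
    """
    units_touched = math.ceil(write_size_kb / iu_size_kb)
    return units_touched * iu_size_kb / write_size_kb


# Compare a 4KB host write across the three IU sizes named in the article.
for iu_kb in (4, 16, 64):
    factor = iu_write_amplification(4, iu_kb)
    print(f"4KB write on a {iu_kb}KB IU: {factor:.0f}x")
```

Under this model a 4KB write costs 16x on a 64KB IU but only 4x on a 16KB IU, while any write that is a whole multiple of the IU size costs 1x.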

Thanks to the increased layer count, Solidigm is able to offer the D5-P5336 in capacities up to 61.44 TB. This takes the crown for the highest capacity in a single NVMe drive, allowing a single 1U server with 32 E1.L versions to hit 2 PB. For a 100 PB solution, Solidigm claims up to 17% lower TCO against the best capacity play from its competition (after considering drive and server count as well as total power consumption).
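The density figures above are easy to check. The sketch below uses the drive capacity and slot count quoted in the article and counts raw capacity only, with decimal units (1 PB = 1000 TB). It assumes no redundancy, spares, or filesystem overhead, so real deployments would need more drives. The helper names are hypothetical.

```python
import math

DRIVE_TB = 61.44      # largest D5-P5336 capacity, from the article
DRIVES_PER_1U = 32    # E1.L drives per 1U server, from the article


def server_capacity_pb(drive_tb: float = DRIVE_TB,
                       drives: int = DRIVES_PER_1U) -> float:
    """Raw capacity of one fully populated server, in PB."""
    return drive_tb * drives / 1000


def drives_needed(target_pb: float, drive_tb: float = DRIVE_TB) -> int:
    """Minimum drive count to reach target_pb of raw capacity."""
    return math.ceil(target_pb * 1000 / drive_tb)


def servers_needed(target_pb: float) -> int:
    """Minimum count of fully populated 1U servers for target_pb."""
    return math.ceil(drives_needed(target_pb) / DRIVES_PER_1U)


print(f"Per 1U server: {server_capacity_pb():.3f} PB")
print(f"100 PB raw: {drives_needed(100)} drives in {servers_needed(100)} servers")
```

A full 1U server comes out just under 2 PB (about 1.966 PB), and a 100 PB raw deployment needs 1,628 drives across 51 such servers under these assumptions.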

Solidigm D5-P5336 NVMe SSD Specifications

| Aspect | | Solidigm D5-P5336 |
|---|---|---|
| Form Factor | | 2.5" 15mm U.2 / 7.5mm E3.S / 9.5mm E1.L |
| Interface, Protocol | | PCIe 4.0 x4 NVMe 1.4c |
| Capacities | U.2 | 7.68 TB, 15.36 TB, 30.72 TB, 61.44 TB |
| | E3.S | 7.68 TB, 15.36 TB, 30.72 TB |
| | E1.L | 15.36 TB, 30.72 TB, 61.44 TB |
| 3D NAND Flash | | Solidigm 192L 3D QLC |
| Sequential Performance (GB/s) | 128KB Reads @ QD 128 | 7.0 |
| | 128KB Writes @ QD 128 | 3.3 |
| Random Access (IOPS) | 4KB Reads @ QD 256 | 1005K |
| | 16KB Writes @ QD 256 | 43K |
| Latency (Typical) (µs) | 4KB Reads @ QD 1 | ?? |
| | 4KB Writes @ QD 1 | ?? |
| Power Draw (Watts) | 128KB Sequential Read | ?? |
| | 128KB Sequential Write | 25.0 |
| | 4KB Random Read | ?? |
| | 4KB Random Write | ?? |
| | Idle | 5.0 |
| Endurance (DWPD) | 100% 128KB Sequential Writes | ?? |
| | 100% 16KB Random Write | 0.42 (7.68 TB) to 0.58 (61.44 TB) |
| Warranty | | 5 years |

Note that Solidigm is stressing endurance and performance numbers for IU-aligned workloads. Many of the interesting aspects are not yet known as the product brief is still in progress.
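The DWPD ratings can be turned into total lifetime writes with simple arithmetic. The sketch below assumes that DWPD is defined over the 5-year warranty period with 365-day years, which is the usual convention but is not stated in the article. The function name `lifetime_writes_pb` is hypothetical.

```python
def lifetime_writes_pb(dwpd: float, capacity_tb: float,
                       warranty_years: int = 5,
                       days_per_year: int = 365) -> float:
    """Total rated writes in PB, assuming DWPD spans the warranty period."""
    return dwpd * capacity_tb * days_per_year * warranty_years / 1000


# 16KB random write ratings for the smallest and largest capacities.
for dwpd, capacity_tb in ((0.42, 7.68), (0.58, 61.44)):
    total_pb = lifetime_writes_pb(dwpd, capacity_tb)
    print(f"{capacity_tb} TB at {dwpd} DWPD: about {total_pb:.1f} PB written")
```

Under that assumption, the 7.68 TB model is rated for roughly 5.9 PB of 16KB random writes and the 61.44 TB model for roughly 65 PB, which shows how a low DWPD figure still amounts to a large absolute write budget on a very large drive.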

Ultimately, the race for the capacity crown comes with tradeoffs. Much as hard drives adopted shingled magnetic recording (SMR) to eke out extra capacity at the cost of performance in many workload types, Solidigm is adopting a 16KB IU with QLC NAND optimized for read-intensive applications. Given the massive capacity per SSD, we suspect many data centers may find it perfectly acceptable (at least, endurance-wise) to use it in other workloads where storage density requirements matter more than write performance.

18 Comments


linuxgeex - Thursday, July 20, 2023

QLC has been getting a lot of misplaced hate for the last 3 years. There's been plenty of well engineered QLC products that support at or near 1000 P/E cycles, which was the high tide mark for TLC for a long time. Like any other tool, QLC can be misused. Blaming the tool is a sign of a weak mind. Linus Sebastian gave a great example of that yesterday, lol. The LTT screwdriver can be used as a shiv. Or it can be used to drive screws. How it gets used is up to whoever has it in their hands. Don't blame the tool.

29a - Thursday, July 20, 2023

I have a 2 TB 660p that is almost 5 years old that's still running strong.

bill.rookard - Thursday, July 20, 2023

I have a Crucial M4 64GB which is currently at 101,390 POH, with 4% of life reported as 'used'. As of now it had one single erase error... Wear leveling count is 134, and 125 power cycles. Pretty durable little SSD.

anthony111 - Friday, July 21, 2023

And that’s a client model, vs this enterprise. Many people also don’t realize that the user can adjust the overprovisioning slider up and down to trade off space for endurance. A modest increase in overprovisioning can easily double endurance.

One may see, for example, a 1TB 1DWPD drive but an 800GB 3DWPD. They may well be the same hardware with different overprovisioning.

ballsystemlord - Friday, July 21, 2023

I helped another gentleman who was experiencing problems with his 660p drive. It had ~50% of its write life span left and it was throwing lots of errors when he'd try to read or write data.

So although some of you have had great experiences, and certainly write-once ROM is QLC's ideal use case, that doesn't change the fact that these drives have a tendency to be unreliable due to the additional states needed to store data.

But in general, QLC's main problems are its slow write rate once you run out of SLC cache, thus requiring you to way over-provision your storage, and QLC's lack of endurance compared to modern TLC designs which can get >= 1200 TBW per TB of capacity.

anthony111 - Friday, July 21, 2023

Sophistry. The number of Vts impacts endurance not reliability. And 3000 PE cycles here is impressive. Endurance is FUD, and don’t compare enterprise drives to value client SKUs.

ballsystemlord - Saturday, July 22, 2023

By no means is sophistry my goal nor do I understand how you even thought that's what I was doing. Everything I wrote, excluding the poor end-user's story, can be verified by reading up on SSD info.

Also, I was responding to the comments above, not making an argument about server SSDs, though certainly QLC is QLC no matter where it is placed.

Samus - Friday, July 21, 2023

Yep. I have installed numerous 670p SSDs as primary/boot drives in dozens of office PCs and NASes. The most wear I've seen on a QLC SSD was a 665p and it was at 91% still after years of use. Strangely it had over 100TBW and is a 1GB model so it should be closer to 80%, but who knows how the wear calculations work; it isn't just a fixed estimate based on TBW - it probably factors in reserve area, SLC cache shifting, etc.

PeachNCream - Friday, July 21, 2023

If the life span estimates originate from manufacturer supplied software then there may be something of a bias in presentation of useful remaining durability and algorithmic functionality embedded that plays a bit with the numbers to offer a placebo-like effect to end users prone to bother with installing and running said software. Vendors are well aware that those who would install said software are enthusiasts that are VERY easy to dupe and like little bar graphs and numbers while people with a bit more between the ears are less interested in claims in software for anecdotal individual use cases and more interested in statistical failure rates which are simply not readily available from a credible source across enough QLC products yet. In short, it's a bit too early to tell with most QLC products and there is a lack of data which manufacturers are banking on as the status quo for the foreseeable future while they reap the benefits of people singing single-instance praise which they tend to do more often than make public admissions of their own foolish purchases or data loss resulting from drive failure. Human nature exploited through marketing is powerful and enough people understand how to exploit it while not enough people realise it's happening to them.

29a - Friday, July 21, 2023

I'm guessing you're one of the people who have been trash talking QLC for the last 5 years.